Comparing characters that are the same is, in a way, already a simple model, but the generalization is to build a frequency table and check the distribution. Given a string of length N, how many "A" characters should I expect on average, given my model (which can be the English distribution, or a natural distribution)?

But then what about "abcdefg"? There is no repetition here, yet it is not random at all. So what you want here is to also take the first derivative, and check the distribution of the first derivative.
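Here is a minimal sketch of that first-derivative check, assuming "first derivative" means the differences between consecutive character codes. The names `SequenceStats`, `Entropy` and `FirstDifferences` are hypothetical helpers made up for illustration, not something from the original answer.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper class; the names are illustrative assumptions.
public static class SequenceStats
{
    // Shannon entropy, in bits per symbol, of any sequence of symbols,
    // computed from the frequency table of the sequence itself.
    public static double Entropy<T>(IEnumerable<T> symbols)
    {
        var counts = symbols.GroupBy(x => x).Select(g => g.Count()).ToList();
        double total = counts.Sum();
        if (total == 0) return 0.0;

        double result = 0.0;
        foreach (int count in counts)
        {
            double frequency = count / total;
            result -= frequency * Math.Log(frequency, 2);
        }
        return result;
    }

    // "First derivative" of a string: the difference between each
    // character code and the one before it.
    public static IEnumerable<int> FirstDifferences(string s)
    {
        for (int i = 1; i < s.Length; i++)
            yield return s[i] - s[i - 1];
    }
}
```

For "abcdefg", `SequenceStats.Entropy("abcdefg")` is log2(7), about 2.81 bits per character, because every character is distinct. `SequenceStats.Entropy(SequenceStats.FirstDifferences("abcdefg"))` is 0, because every difference is 1. A low value on the differences is a hint that the string follows a simple pattern even though no character repeats.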

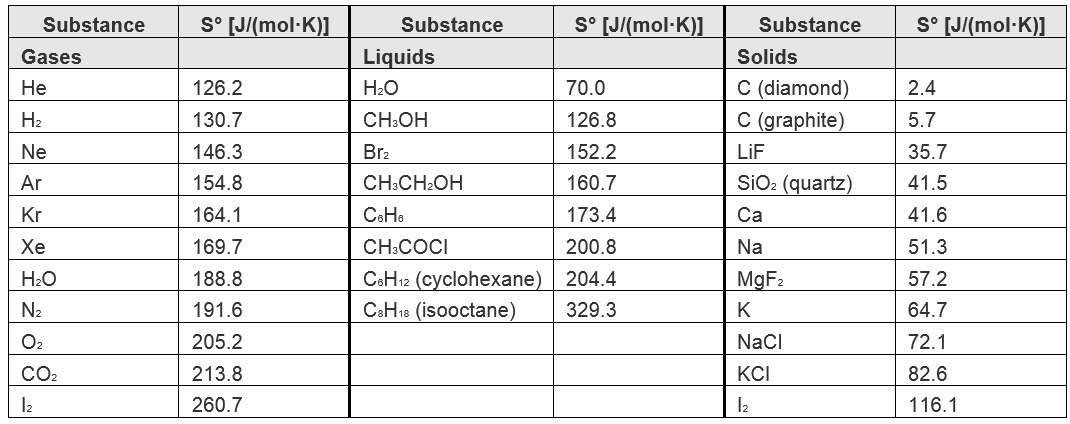

In theory you can measure entropy only from the point of view of a given model. For instance, the digits of pi are well distributed, but is the entropy actually high? Not at all, since the infinite sequence can be compressed into a small program that calculates all the digits. I'll not dig further into the math side, since I'm not an expert in the field, but a few simple ideas can make a very simple but practical model.

I also came up with this, based on Shannon entropy. In information theory, entropy is a measure of the uncertainty associated with a random variable. In this context, the term usually refers to the Shannon entropy, which quantifies the expected value of the information contained in a message, usually in units such as bits. It is a more "formal" calculation of entropy than simply counting letters:

    /// returns bits of entropy represented in a given string, per
    public static double ShannonEntropy(string s)
    {
        double result = 0.0;
        int len = s.Length;
        // The counting step did not survive in this copy; grouping with
        // System.Linq is an assumed reconstruction (character -> occurrences).
        foreach (var item in s.GroupBy(c => c).ToDictionary(g => g.Key, g => g.Count()))
        {
            var frequency = (double)item.Value / len;
            result -= frequency * (Math.Log(frequency) / Math.Log(2));
        }
        return result;
    }

[Table: examples of entropy calculations for passwords of varying strength, by complexity. Surviving notes: assuming the ASCII printable character set; assuming no capitalization variations are used.]

However, this formula would only apply to the simplest of cases. Many online password meters and registration forms complicate matters by imposing various arbitrary (and unfortunately non-random) restrictions on the patterns allowed in a password. Examples include "at least one upper-case character", "at least one symbol", etc. While these rules were initially designed to reduce the risk of social engineering or dictionary attacks, it turns out that in many cases they may degrade password strength.
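As a rough worked illustration of the Shannon entropy calculation described above (the example string "hello" and the symbols $H$ and $p_i$ are my own choices, not from the original answer), let $p_i$ be the relative frequency of each distinct character in the string:

$$H = -\sum_i p_i \log_2 p_i$$

In "hello", the letters h, e and o each have frequency $1/5$ and l has frequency $2/5$, so

$$H = 3 \cdot \tfrac{1}{5} \log_2 5 + \tfrac{2}{5} \log_2 \tfrac{5}{2} \approx 1.92 \text{ bits per character}$$

Note that this is a per-character figure. Multiplying by the length (5) gives roughly 9.6 bits for the whole string under this model.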

It is important to note that statistically, a brute force attack will not require guessing ALL of the possible combinations to eventually hit the right permutation. We therefore tend to look at the expected number of guesses required, which can be rephrased as how many guesses it takes to have a 50% chance of guessing the password. This can be expressed by extending the formula above:

$$\text{Expected number of guesses (to have a 50\% chance of guessing the password)} = 2^{\text{Entropy} - 1}$$
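To put numbers on the expected-guesses formula, here is a small sketch. The entropy formula it builds on is not shown in this part of the text, so `EntropyBits` assumes the common baseline of length times log2(alphabet size), which only holds when every character is picked uniformly at random. `PasswordMath` and both method names are hypothetical.

```csharp
using System;

// Hypothetical helper class; the names are illustrative assumptions.
public static class PasswordMath
{
    // Assumed baseline: entropy in bits = length * log2(alphabet size).
    // Only valid if each character is chosen uniformly at random.
    public static double EntropyBits(int alphabetSize, int length)
        => length * Math.Log(alphabetSize, 2);

    // Expected number of guesses for a 50% chance of success:
    // 2^(entropy - 1), i.e. half of the 2^entropy possible combinations.
    public static double ExpectedGuesses(double entropyBits)
        => Math.Pow(2, entropyBits - 1);
}
```

For example, 8 random lowercase letters give `PasswordMath.EntropyBits(26, 8)`, about 37.6 bits, and `PasswordMath.ExpectedGuesses` of that value is half of 26^8, roughly 1.04 × 10^11 guesses.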
